Another late night, another hackathon. This time our team came up second on the iNTU Hackathon podium.

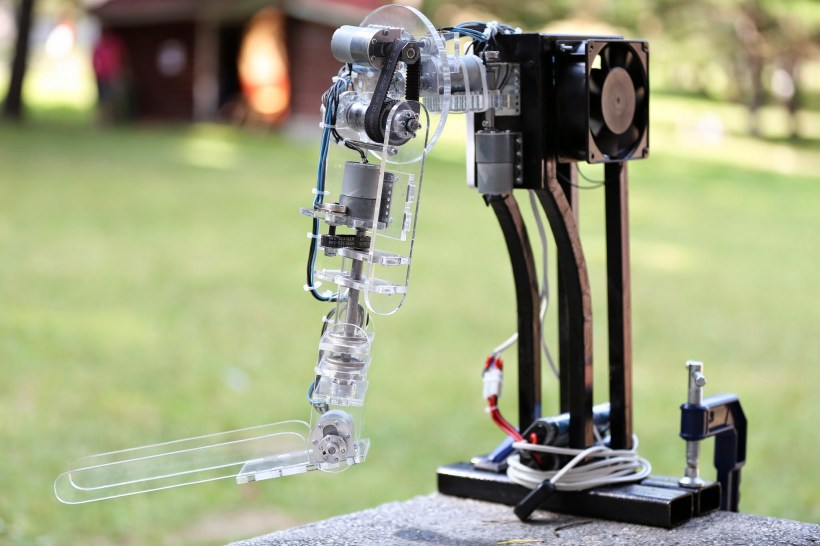

We created an autonomous robotic arm designed to detect and pickup simple objects in the area in front of it. The robot firstly performs a fast scan by rotating an IR distance sensor to detect if there is any objects in front of it. If there is an object, the robot will perform another scan to more accurately estimate the Cartesian coordinates of the object. When it has this information it will attempt to pick the object up with its claw and move it to the trash bin.

This is how it works:

The arm itself has 5 Dynamixel AX-12 servos and one AX-18 providing for 4 degrees of freedom. At its base it has a Sharp GP2D12 sensor. The servos are commanded directly from a laptop running a python script. The script gets input from the Arduino board which digitizes the analog output of the IR sensor. Outlier detection and averaging are used to make the signal more reliable. Furthermore, the signal is processed into distance by making use of interpolating functions that were determined from a calibration test. Distance data is then processed to estimate the size of the object. Location and size is then used by the program to command the arm to grasp and lift the object. In order to achieve this, firstly, a forward kinematic model was validated, followed by the implementation of the inverse kinematic model in a Python function. The claw is 3D printed and adapted from an open source project.

Try it out: GitHub Repo